Be Lou Reed for 64 Minutes: How 3D Sound Met “Metal Machine Music”

TRIBECA, MANHATTAN/LONG BEACH, CA: No, your speakers aren’t completely messed up – that’s Lou Reed’s Metal Machine Music. Entrancing to some, physically repulsive to others, since the record’s 1975 release it’s been guaranteed to make sense to just one head on the planet: Lou Reed’s.

But what if you could be Lou Reed for a 64-minute live performance of his maniacal masterpiece? Not stuck out somewhere in the audience, but up there onstage, and not to the NYC rock pioneer’s right or left, but inside his ears? It turns out, now you can do just that.

No, this doesn’t depend on a body-occupancy wormhole à la Being John Malkovich. Instead, now through April 15, California State University at Long Beach’s University Art Museum is presenting an audio installation of Reed’s Metal Machine Trio. The show provides audience members with an accurate sonic replica of Reed’s experience onstage during an April 2009 performance of the composition, with special guest John Zorn alongside, at NYC’s Gramercy Theatre.

Those who make the pilgrimage to CSULB get the chance to experience “Metal Machine Trio: The Creation of the Universe” as it was presented that night in 2009, with the piece’s four 16-minute segments, echoing the four sides of the original vinyl release, running in a continuous loop for the duration of the show.

So why did Reed and the multinational engineering firm Arup go to such lengths to present a performance rooted in an album that has been called “unlistenable” and “ear-wrecking electronic sludge,” and that ranked #4 on Q magazine’s “Fifty Worst Albums of All Time” list? Because Metal Machine Music has also been hailed as a landmark album, one that helped make industrial music, noise rock, and modern sound art possible.

Wooing Lou Reed

Making the 3D recording and then reproducing it in a public installation was a serious test for Arup’s acoustic consultants, who drew on the company’s deep resources, including a 3D SoundLab in the heart of its TriBeCa offices, to devise a unique method of Metal Machine immersion.

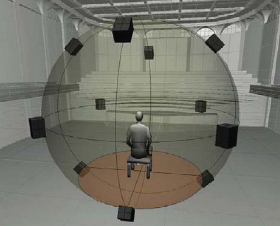

The project began when Arup’s Music, Arts and Multimedia Consultant Mike Skinner approached Reed and invited him to visit the Arup SoundLab, a facility designed by Arup’s acousticians to recreate the acoustics of any physical (or imagined) space using 3D acoustic modeling techniques. The lab can also capture the acoustic characteristics of existing spaces in 3D, so that those spaces can be recreated within the SoundLab.

“I knew Lou had been interested in 3D sound for many years,” Skinner recalls, “and I thought he would be interested in hearing what Arup had done, and also discussing my thoughts for where it could go. I was hoping that he would be sufficiently impressed that he might want to work on a project together.

“In the subsequent discussion between Lou, me, and Raj Patel, Arup’s Principal of Acoustics/Theatre Consulting Leader Americas, Lou expressed how he had never heard a live recording of a performance that sounded the way a show sounds to him onstage. So the idea was born to amalgamate these techniques and capture the event from the performer’s perspective in his upcoming shows for the Metal Machine Trio.”

Lou Reed was intrigued by the chance to give audiences a listen to "Metal Machine" from his perspective. (Photo by Dave Rife, courtesy of Arup.)

Once the live show details came into focus (a quick two-night stint at the intimate but roomy Gramercy Theatre on 23rd Street), Skinner and Patel started strategizing how they would record, mix, and ultimately present this noteworthy content.

“We already knew that there were two ways we could present the content to listeners,” Patel says. “Either binaural, over headphones, or over a sound system capable of full 3D Ambisonic reproduction, which is the system used in the SoundLab.

“The first step was to carefully consider every aspect of the performance – the players, instruments, the room acoustics, and the recording equipment that would be needed. Lou met with Arup’s team at the venue and together we talked through the main elements of the performance to develop a recording concept and approach.

“We also discussed specific microphones and other equipment that Lou had used in the past and felt had produced successful sonic outcomes. Over a couple of weeks, we discussed ways to capture the performers and the room, using a range of techniques that would give us sufficient audio to create a 3D sonic landscape back in the lab.”

3D Audio – What Makes it Different

Not surprisingly, recording for 3D audio is a different beast than recording for traditional two-speaker playback. “Stereo recordings use panning techniques to make sounds move between the left and right channels,” explains Patel. “In good listening conditions, you can also play with level to make sounds appear to move forward or backward in the mix. When you listen to stereo recordings over headphones and a sound moves from left to right, it does so inside your head.”
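To make the panning idea concrete, here is a minimal Python sketch of one common stereo pan law, the constant-power (sine/cosine) law. It is a generic textbook technique, not a description of Arup’s tools; the function name and the -1.0 to +1.0 pan range are illustrative assumptions.

```python
import math

def constant_power_pan(sample: float, pan: float) -> tuple[float, float]:
    """Split a mono sample into (left, right) using a sine/cosine pan law.

    pan runs from -1.0 (hard left) through 0.0 (center) to +1.0 (hard right).
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    # Map the pan position onto a quarter circle: 0 rad = hard left, pi/2 = hard right.
    theta = (pan + 1.0) * math.pi / 4.0
    left = sample * math.cos(theta)
    right = sample * math.sin(theta)
    return left, right

# Sweep a sound from left to right; the total power (L^2 + R^2) stays constant.
for pan in (-1.0, -0.5, 0.0, 0.5, 1.0):
    l, r = constant_power_pan(1.0, pan)
    print(f"pan={pan:+.1f}  L={l:.3f}  R={r:.3f}  power={l * l + r * r:.3f}")
```

Every pan position lands somewhere on the line between the two speakers, which is exactly the limitation Patel describes: a pan law like this can place a sound left, right, or in between, but never above, below, or behind the listener.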

As Patel points out, the movie business developed techniques to make sounds move around, enhancing the listener’s experience. For example, 5.1 uses three front channels (left, center, and right) as the primary channels, and pans sounds to the two rear left and right channels to give a sense of movement.

“All of this happens in the horizontal plane and within the field/boundary of the loudspeakers,” Patel says. “So it is almost impossible to give a realistic sense of sound moving above or below you, or to provide a sense of depth, e.g. sound coming from 100ft away, passing you, and then going off into the distance. And it only really works in idealized listening conditions, which most people do not have access to.”

According to Patel, the limitations of these systems in reproducing sound in 3D have been well known for many years. The fundamental mathematics for capturing sound in complete 3D, known as Ambisonics (more information on the field can be found at Ambisonics.net), was developed in the 1970s, but only in the last 15 years or so has the processing power been available to start making use of it.

“Essentially, the technique involves three overlapping figure-of-eight microphones, individually capturing sound along the X [front-to-back], Y [side-to-side] and Z [floor-to-ceiling] axes at the listening location, and a W channel capturing the omnidirectional response,” says Patel. “The captured signal type is called B-Format. With the appropriate decoding you can re-create for a listener, either within an appropriate loudspeaker array or on headphones, the true 3D sound as it would be experienced by the listener.”
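The encoding Patel describes can be sketched in a few lines of Python. This is a minimal first-order illustration that assumes the traditional (FuMa) channel weighting, in which W is scaled by 1/√2; the function names, angle conventions, and one-sample-at-a-time interface are assumptions for illustration, not Arup’s software. It encodes a sound arriving from a given direction into W, X, Y, and Z, then “decodes” it by steering a virtual microphone, one of the simplest forms of B-Format decoding.

```python
import math
from typing import NamedTuple

class BFormat(NamedTuple):
    w: float  # omnidirectional component
    x: float  # front-to-back figure-of-eight
    y: float  # side-to-side figure-of-eight
    z: float  # floor-to-ceiling figure-of-eight

def encode(sample: float, azimuth_deg: float, elevation_deg: float) -> BFormat:
    """Encode a mono sample arriving from one direction into first-order B-Format.

    Assumes FuMa weighting (W scaled by 1/sqrt(2)). Azimuth is measured
    counterclockwise from straight ahead (positive = left); elevation is
    measured upward from the horizontal plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return BFormat(
        w=sample / math.sqrt(2.0),
        x=sample * math.cos(az) * math.cos(el),
        y=sample * math.sin(az) * math.cos(el),
        z=sample * math.sin(el),
    )

def virtual_mic(b: BFormat, azimuth_deg: float, elevation_deg: float,
                pattern: float = 0.5) -> float:
    """Decode B-Format into the output of a virtual first-order microphone.

    pattern = 1.0 gives an omni, 0.5 a cardioid, 0.0 a figure-of-eight.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    # Project the X/Y/Z figure-of-eight signals onto the virtual mic's direction.
    directional = (b.x * math.cos(az) * math.cos(el)
                   + b.y * math.sin(az) * math.cos(el)
                   + b.z * math.sin(el))
    # Undo the 1/sqrt(2) weighting on W, then blend omni and directional parts.
    return pattern * math.sqrt(2.0) * b.w + (1.0 - pattern) * directional

# A sound arriving from above and to the left of the listening position.
src = encode(1.0, azimuth_deg=90.0, elevation_deg=45.0)
print(virtual_mic(src, 90.0, 45.0))    # cardioid aimed at the source: approximately 1.0
print(virtual_mic(src, -90.0, -45.0))  # cardioid aimed directly away: approximately 0.0
```

Because the four channels describe the sound field itself rather than a particular speaker layout, the same recording can in principle be aimed, rotated, or decoded to whatever loudspeaker array or headphone renderer is available; real decoders are considerably more involved than this single virtual microphone.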

Inside the SoundLab

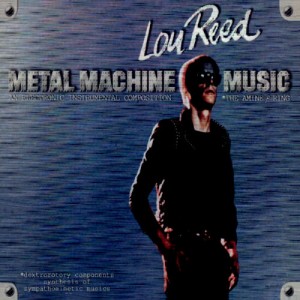

Skinner, Patel, and their colleagues are aided in their understanding of multichannel audio by an extremely powerful tool at Arup’s NYC headquarters: the aforementioned SoundLab. What appears to the casual observer to be simply a square room with acoustic paneling transforms into a transporting, but highly scientific, sonic sanctuary once the battery of speakers inside starts sounding out.

The room was designed not to enable the reproduction of Lou Reed noise-rock concerts, but to aid Arup in its day-to-day acoustics consulting work on the design of new buildings and the refurbishment of existing ones. “In working with clients, design teams, musicians, etc., for many years we had to explain aspects of sound in words and persuade our clients to change aspects of the building to meet our acoustic goals,” Patel notes. “This can be an uphill process, because we work with architects, who are very visually inclined, and they find it hard to change a design based on our expert advice alone.”

Before long, it became clear that a tool allowing clients to listen to changes in real time would make persuading them much easier and lead to better acoustically designed buildings. Arup started working with microphone manufacturers, hardware and software developers, research institutions, and 3D acoustic modeling software companies to take the concept of Ambisonics and bring it to a live working format.

“The aim was to create a system that would allow us to go out and measure any environment in 3D, and also to make computer models to predict how spaces would sound, also in 3D,” says Patel. “By calibrating the computer models against real measurements, we have systematically assembled a comprehensive 3D room acoustics database over many years. That’s how we got to the SoundLab in the mid-to-late 1990s. It has revolutionized the way we work with clients, because now, rather than write lengthy technical reports and discuss what things will sound like, we can simply demonstrate the effect that changes in shape, form, volume, materials, musician placement, loudspeaker selection, construction type, etc. will have on the sound in a space.

“It also takes the so-called ‘black magic’ out of acoustics to make it real, tangible, and understandable. In addition, it has meant we can create new sonic landscapes with artists who want to push the boundaries of how their art relates to the listener.”

For a lifelong audio experimentalist like Skinner, who cut his teeth bootstrapping musically inclined installations, both on his own and with numerous arts organizations, the allure of the SoundLab was strong enough to draw him away from a life of starving artistry.

“I had gotten to know Raj Patel, and some of the other acousticians who work for him, over the years while I was working as a freelance producer of art installations,” Skinner says. “During that time I attended several demonstrations in the SoundLab and I became increasingly excited about the possibilities it held as a new platform for creating and documenting sound works.

“When the opportunity came up for me to work at Arup in-house, I jumped at it. My friends thought I was crazy for taking a ‘corporate job,’ but I was, and am, convinced that new developments in music are not going to come through the ordinary studio structure anymore. With the record business in a fatal tailspin due to piracy, there’s less impetus for labels or artists to invest in new technologies. Additionally, as a producer, it discourages me to know that the mixes I work so hard on will, for the most part, be listened to through crappy Mac speakers.

“So it’s exciting to me to, first of all, work on music in a new and uncharted format and secondly to create site-specific works where, in addition to the audio, I can also have input or complete control over the equipment and venue where it will be heard.”

Dream Into Action: Capturing The Metal Machine Trio Live in 3D

With the Metal Machine Trio performance at hand (a show that saw Reed collaborating with Ulrich Krieger and Sarth Calhoun, in addition to Zorn), Skinner and Patel went to work. The objective was to give future installation visitors Reed’s precise perspective on the performance, as he revisited the territory of his 1975 album, a record consisting entirely of guitar feedback played at different speeds.

The Arup team decided to place 3D Soundfield microphones, binaural dummy heads, and a variety of omni and figure-8 mics throughout the venue and on the stage, in the locations they expected would provide the most interesting audio. Reed’s front-of-house (FOH) engineer also took part, supplying a feed from the console. All of the tracks were captured on several standalone hard-disk recorders, and Patel and Skinner then time-aligned them in post-production.
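The article doesn’t say how the separate recorders were lined up. One common approach is cross-correlation: slide one track against another and keep the offset at which they match best. The Python below is a hypothetical, brute-force sketch of that idea on toy signals; the function names and the `max_lag` search window are illustrative assumptions, not a description of Arup’s actual workflow.

```python
def estimate_lag(reference: list[float], other: list[float], max_lag: int) -> int:
    """Return the shift (in samples) that best lines `other` up with `reference`.

    A positive result means `other` starts late and should be moved earlier.
    Brute-force cross-correlation: fine for a sketch, far too slow for a full concert.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Sum of products between the reference and the shifted track.
        score = 0.0
        for i, ref_sample in enumerate(reference):
            j = i + lag
            if 0 <= j < len(other):
                score += ref_sample * other[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag

def align(other: list[float], lag: int) -> list[float]:
    """Shift `other` by `lag` samples, padding with silence to keep its length."""
    if lag > 0:
        return other[lag:] + [0.0] * lag
    if lag < 0:
        return [0.0] * (-lag) + other[:lag]
    return list(other)

# Toy example: the same sharp transient captured by two recorders.
reference = [0.0] * 20
reference[5] = 1.0   # transient on the reference track
late = [0.0] * 20
late[8] = 1.0        # same transient, 3 samples later on a second recorder
lag = estimate_lag(reference, late, max_lag=10)
print(lag)                          # 3
print(align(late, lag).index(1.0))  # 5
```

This only corrects a fixed offset. Independent recorders running on separate clocks can also drift slightly apart over a long take, which a sketch like this ignores.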

In a show where noise was the point, managing the peaks was of paramount concern during the recording. “The main challenge was to anticipate the maximum volumes we might experience so that we could set our gains to capture the quietest nuances, while also allowing room for the loudest moments,” Skinner states. “This is always an engineer’s job, of course, but in this case the extreme dynamic range, and the improvised nature of the piece made it more difficult to anticipate.

“We didn’t want to be riding levels in post-production, to avoid any problems with the 3D imaging, but we also wanted to capture all of the detail in the audio.”
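The trade-off Skinner describes comes down to simple level arithmetic. Below is a minimal Python sketch, assuming peak levels expressed in dBFS (1.0 = digital full scale); the amplitudes and the 12 dB headroom figure are made-up illustration values, not numbers from the Gramercy recording.

```python
import math

def dbfs(amplitude: float) -> float:
    """Peak level in dBFS, where an amplitude of 1.0 is digital full scale."""
    return 20.0 * math.log10(amplitude)

def gain_for_headroom(expected_peak: float, headroom_db: float) -> float:
    """Linear gain that puts the loudest expected peak `headroom_db` below full scale."""
    target_peak = 10.0 ** (-headroom_db / 20.0)
    return target_peak / expected_peak

# Hypothetical numbers: at unity gain the loudest moment would hit twice full scale
# (i.e. clip), and we want a 12 dB safety margin above it.
gain = gain_for_headroom(expected_peak=2.0, headroom_db=12.0)
loudest = dbfs(2.0 * gain)    # lands at -12 dBFS by construction
quietest = dbfs(0.01 * gain)  # a quiet nuance recorded through the same gain
print(f"gain={gain:.4f}  loudest={loudest:.1f} dBFS  quietest={quietest:.1f} dBFS")
```

Because one gain setting applies to the whole take, every extra decibel of headroom reserved for the loudest peaks also pushes the quietest material a decibel closer to the recorder’s noise floor; with an improvised piece, the expected peak itself is a guess.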

Once the Arup team’s ears had recovered from a performance that The New York Times’ Ben Ratliff, in his review, called “improvised, loud, heavily processed, and some of it ugly enough to make people leave,” it was time to go to work on the mix. Besides extreme levels of intentional noise, Skinner and Patel had everything from laptops and saxophones to a Continuum Fingerboard and, of course, guitars to tame on their Arup rig.

“I spent quite a lot of time aligning the tracks, creating different permutations of the different audio sources to see which we liked best, and putting the musicians into the proper place in the sound field,” Skinner says of the mix process. “Also, as much as we try to keep everything purely scientific, there is still a bit of subjective ‘feel’ work to be done, especially in terms of the spatialization.”

From Stage to Installation

Mixes in hand, Reed and Arup looked to the West Coast, where CSULB launched the Metal Machine Trio installation this past January 27th. Visitors enter a room blacked out as far as code allows and find chairs scattered throughout, but they are free to sit, stand, or walk around the space to explore the sounds as they wish.

“It’s like being able to run around on the stage during the concert without having to worry about tripping over cables, or Lou!” Skinner laughs. “Multiple visitors can experience the installation simultaneously. There is a limit to the number of people in the ‘sweet spot,’ but at any point in the gallery the listener will have a slightly different perspective on the audio.

“Since I was a performer for so long, I’ve certainly experienced most of my shows either from onstage or in the wings. There’s a different energy up there than in the audience: sound comes at you from all directions, and what you hear really depends on where you’re standing on the stage. The guitarist on stage right has a very different experience than the lead vocalist or drummer, for example.

“That has changed a bit with the introduction of in-ear monitors. But as someone who has never worn those, to me, the live experience is much different, and more dimensional, than someone in the audience primarily hearing a polished, mono (or maybe stereo) mix.”

Just as important as the audio team’s satisfaction, Reed, one of NYC’s undisputed kings of rock, has given the CSULB installation his stamp of approval, as have the exhibit’s visitors. “Lou was very happy with the installation,” says Skinner. “He told me that ‘The only thing better than this installation was playing the show.’ So that pretty much says it all to me!

“I couldn’t be happier with Lou and the band’s reaction, as well as the audience reaction. I think the audience is really getting a unique experience getting to ‘sit’ on stage with the band during the show. I watched groups of 30 people sit on the floor, eyes closed, just bathing in the wall of sound. It was really the most satisfying experience with an audience experiencing relatively abstract audio that I can ever remember having.”

With the tough task of recreating Metal Machine’s harsh realities 3,000 miles away completed, the Arup team is naturally looking ahead to the next possible pairings of sound art with their vaunted SoundLab. After all, entirely new perspectives for experiencing music don’t emerge every day.

“I’m very hopeful that, with this installation being available to visitors for such an extended period, some of my favorite artists will drop by for a listen without me or anyone from Arup around, so they can decide for themselves that this is, in fact, an interesting and viable platform,” Mike Skinner says. “I would love to work on an album that has more traditional songwriting aspects. I keep thinking that someone like Mike Patton or Björk or Grizzly Bear or Radiohead would really make use of, and help us to push, this way of listening and composing.”

— David Weiss
